Getting down to Whitestown, Ind., yesterday took about 4 hours and 45 minutes, including a stop to empty Cassie, which isn't great but isn't nearly as bad as I'd feared. Getting home, however, taught me about the limitations of Google Maps in a way I'm not likely to forget.

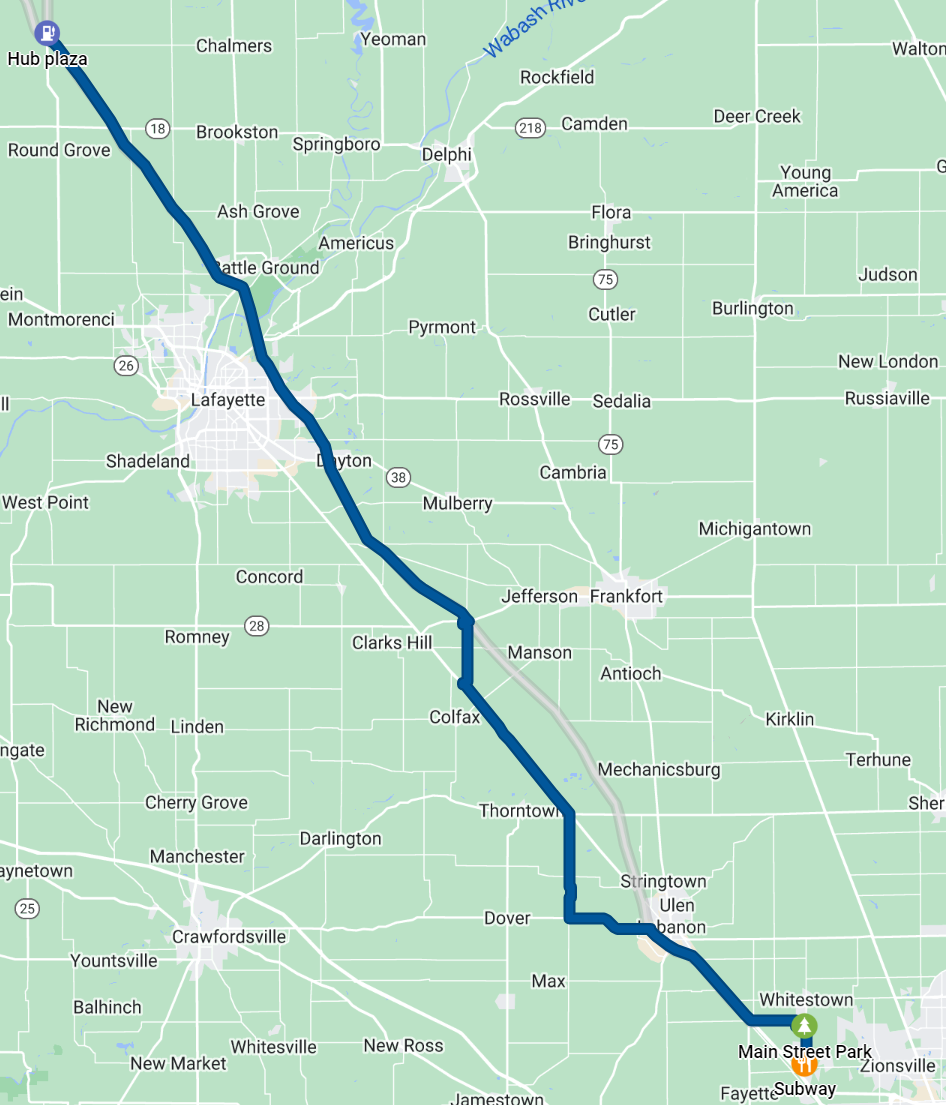

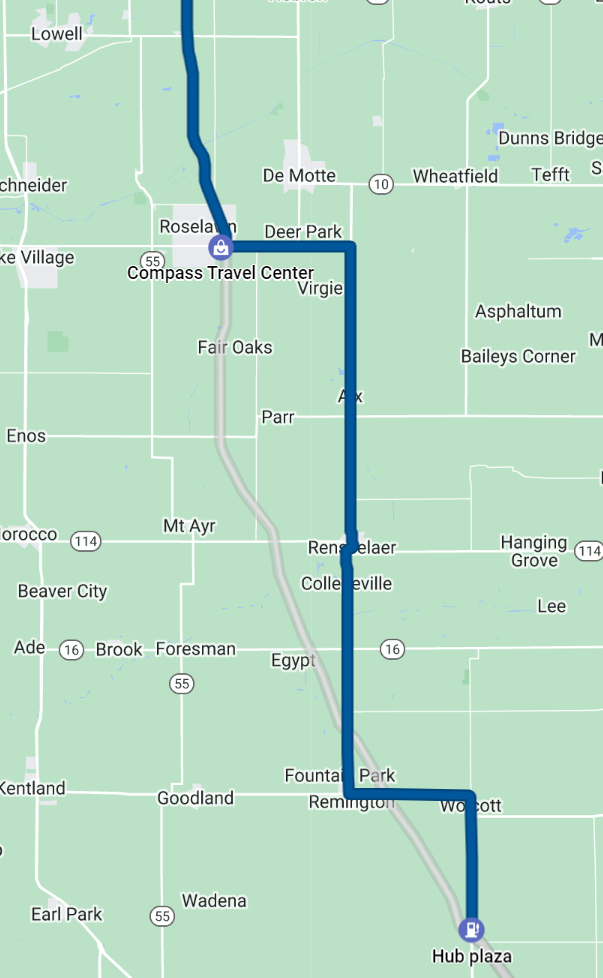

Here's the first leg of our return trip, from Whitestown to just south of Wolcott, Ind., a distance of 109 km:

That took us 3 hours and 41 minutes, an average of 29.6 km/h. People ride bikes faster than that.

You can see spots where we got off Interstate 65 and followed Google's instructions to take alternate roads, because traffic on I-65 was moving at the average speed of a portly beagle. (I'm not making up the comparison. I'm talking about a specific beagle.)

As it turned out, though, Google had no data at all about the alternate roads until people started driving on them. So when Google said "take County Road 50 N to County Road 500 W" because it thought no one was on those roads, that was true until Google told 300 people to take them.

That made getting back on I-65 a new kind of hell as stoplights set up to admit the 2 or 3 cars usually going through an intersection completely failed to clear the 5-km line of cars trying to turn.

We finally learned our lesson, too late, after we gave up on Google Maps and lit out on US-231 towards Wolcott. From that point until we got onto the Dan Ryan expressway in Chicago we averaged about 90 km/h. We ignored Google and paralleled I-65 until it looked like the Interstate had finally cleared up.

The other thing we learned was, if there's a 40-minute line for the bathroom, leave. We found a couple of gas stations with no lines just 5 minutes from "Hub Plaza" on the map above.

And as a bonus, we got to see a magnificent sunset over the fields of central Indiana that we would not have seen from the Interstate.

The total return time from Whitestown to Chicago was 6 hours and 48 minutes.

Next time I travel through rural parts of the US, I'm going to go back to the navigation skills I learned before we had satnav in every car.

One more thing: if the US had the same level of technology and similar transport policies as our peer nations (not to mention China), I would simply have gotten on a high-speed train in downtown Chicago and gotten out in Indianapolis 90 minutes later. Alas, American transportation is still stuck in the mid-1900s, with no likelihood of advancing—especially in a reactionary state like Indiana.

But just to be clear: it was totally worth it. There is nothing like seeing a total solar eclipse. I'm already thinking about going to Spain in 2026.