My house looks weird

A photographer is coming to my house tomorrow so we can list it on Tuesday. To prepare for this, I've removed about 30 boxes of stuff from the house, mostly books and movies, plus some bookcases and other furniture. Just now after lunch, I removed or put away most of the things I use daily in the kitchen and took everything off the fridge. The house looks great for real-estate photos but it doesn't really feel like my house anymore. Even my normally-cluttered office looks spartan.

Meanwhile:

- Defense Secretary Pete "Cushions" Hegseth does not like unflattering photos of himself, though somehow he doesn't mind when reporters publish the actual words he says out loud.

- Speaking of stupidity, the Defense Department continues its war on intelligence, both the human and artificial kinds.

- They also cut the Civilian Protection Center of Excellence, an internal DoD office tasked with limiting civilian casualties and avoiding targeting mistakes like the one that killed over 100 schoolgirls on February 28th.

- Matthew Yglesias understands that no one wants a quagmire in Iran, but can't see how the war we started there will go any other way.

- Adam Kinzinger wants to know why the department spent $15.1 million on ribeye steak last September while cutting the $20 million required to keep our RC-26B counter-terrorism aircraft flying.

Sorry, I hadn't planned on highlighting just how idiotic our Defense Department has gotten under Hegseth. Here, let's look at two more stories that have nothing to do with how badly our government is run under this administration:

- Two really good breweries, Half Acre and Maplewood, have announced they are merging to reduce materials costs and "amplify founder-led brands."

- The New York Times Magazine explores the world of "Sync Music," which you hear all the time and have no idea why.

OK, back to the mines. Which are eerily uncluttered and depersonalized this afternoon...

A monopoly on competence

We are being governed by unserious people.

The OAFPOTUS's demented adventure in Iran has made clear to people, including those who otherwise wouldn't care, that the Republican Party has given up any pretense of competent governance. They acted out a pantomime of competence for about 30 years, starting when Newt Gingrich shoved the party hard to the loony right in 1994, and even managed to cover up the incompetence underneath with a sheet of tracing paper during the OAFPOTUS's first term. Alas, in the last 14 months it has broken free like the chest-bursting end to Kane at breakfast. Just as many of us predicted.

Michael Tomasky enumerates "how we're all paying the price for the myth of Trump's competence:"

We’ve seen numerous examples in these last 13 months of Trump’s mendacity and malevolence. Unfortunately, a lot of Americans will never see him that way. There are those who adore him unconditionally, but beyond these dead-enders, there are others who know he’s not a good person but aren’t all that bothered by it.

That’s hard for millions of us to accept. But I hope to God that these people are finally starting to move themselves toward the conclusion that, even if they aren’t that troubled by the mendacity and malevolence, the man is just wildly incompetent. A mountain range of mythmaking has gone into creating the Trump persona over the years; by him, by a pliant business press in his real estate days, and, since he entered politics, by a right-wing media that would make the old Soviet press agencies blush and a party of cowardly sycophants, most of whom know very well that he shouldn’t be in charge of a high-volume McDonald’s, let alone the executive branch of the federal government, but would rather let the country collapse than say so.

James Fallows, who worked in the Carter Administration during the Iranian Revolution of 1979, and who has written books about Iran and the defense of the US, smacks the OAFPOTUS for "the arrogance of ignorance:"

This has seemed in a way worse than the immediate aftermath of the 9/11 attacks. Or of the multiple horrific assassinations of the 1960s. Those previous tragedies were things done to America. Now the people at the controls have taken every hard-earned lesson about war and peace and set it ablaze.

The strongest leaders in our history have projected strength through control and calm. Think of John F. Kennedy, during the world’s closest brush with nuclear annihilation, the Cuban Missile Crisis, in 1962. Think of Martin Luther King Jr’s bearing, as his influence expanded and the mortal threats to him increased.

Then think of the clowns and posturers who now have the controls. They don’t know what they don’t know. They have no idea what they are unleashing. It took years for the United States to get into its quagmire in Vietnam. It took many months to prepare the groundwork for the disaster in Iraq.

These people have changed the world, for the worse, in just nine days. And none of us knows how it will end.

Political scientist Francis Fukuyama reminds us that "expecting Iran to unconditionally surrender is a fool's errand:"

When Trump launched the attack with Prime Minister Netanyahu of Israel, he was obviously hoping for a quick victory, something like the outcome he achieved when he snatched Nicolás Maduro of Venezuela in January. But the war expanded across the Middle East, with Iran shooting missiles and drones at American allies and bases all over the Persian Gulf. It was clear that what remained of the Iranian leadership was not about to capitulate, and that the conflict could drag on—as Trump himself admitted—for weeks.

Normally, a smart leader in such a situation would try to lower expectations and declare an achievable objective in the war, such as degrading the better part of Iran’s ability to strike targets with ballistic missiles and drones. This would offer an opportunity for Trump to declare victory and disengage. Instead, Trump did the opposite.

Iran ... is a very big country, and has a lot of places for the surviving regime to hide. It will not be possible to eliminate every missile and drone under their control, so we can expect continuing attacks on U.S.-aligned Gulf states and American facilities into the foreseeable future. The threat of a random drone striking the big airline hubs in the Gulf will be economically very damaging.

[D]emanding unconditional surrender was a very foolish thing for the president to do. I’m tempted to believe that Trump just liked the sound of the words, without thinking through the ways in which they could come back to haunt him. But this was only one poor decision among many. The most serious was the decision to go to war in the first place without a clear rationale for doing so.

Iraq War veteran and former Republican US Representative Adam Kinzinger, dismayed by his party's implosion into a sad cult, takes umbrage at the meme-ification of the war:

There’s something deeply unsettling about watching the official social media accounts of the White House turn war into entertainment.

Leadership—especially civilian leadership—has a completely different responsibility. Their job is not to hype the fight. Their job is to weigh the consequences of it.

They are supposed to be thinking about diplomacy. Alliances. Long-term strategy. Civilian casualties. Escalation risks. The stability of the region. The lives of American service members who may be asked to execute the orders they give. And they are supposed to communicate the gravity of those decisions to the American people.

Instead, what we’re getting looks like a social media account run by someone trying to win the internet for the day.

If the United States is going to commit military power against a country like Iran, the American people deserve more than memes and shifting talking points. They deserve seriousness. They deserve honesty. And above all, they deserve a president who understands that war isn’t content.

It’s responsibility.

Glenn Kessler weighs the cost of never admitting error:

Video evidence examined by Bellingcat, The New York Times, The Washington Post, The Associated Press, and other news outlets leaves little doubt that a Tomahawk cruise missile — used only by the United States in the conflict — struck an Islamic Revolutionary Guard Corps base next to an elementary school that was hit at about the same moment, killing mostly children, according to Iranian media reports.

On Monday, Trump again suggested that Iran — or some other country — was responsible.

As U.S. and Israeli missiles continue to rain on Iran’s cities, it’s imperative that any further tragic accidents are thoroughly investigated and the facts made public. Trump often denies reality, but a little humility goes a long way in the court of world opinion.

Getting back to my main point: the OAFPOTUS and his droogs have taken these wildly incompetent actions because the OAFPOTUS and his droogs are wildly incompetent. The apotheosis of this abjectly stupid presidency is a world in which the Democratic Party monopolizes competence at the Federal level. The MAGA cult continues to drive the Republican Party's remaining competence out of state governments, showing that the party's elites have also lost their minds.

But here we are: in a brand-new land war in Asia, with no rationale for being there and no plausible way to exit gracefully, because the majority party in Congress, which also controls the presidency and the Supreme Court, has an average IQ below the mean and the maturity of a 7th grader.

It's time to take back the government of the US and put these clowns in a time-out at least until they can demonstrate enough competence and seriousness to run a lemonade stand. I give them about 40 years. Or better yet: throw the Republican Party into the landfill of history, along with the Know-Nothings and other stupid parties whence they came. Let Kinzinger and others like him form a competent center-right party (like Germany's Christian Democrats or Australia's Liberals), and let's return to a back-and-forth of ideas about how to govern this country instead of robbing it.

Quick lunchtime links

Spot the thread:

- The OAFPOTUS's adventures in Iran have caused wild gyrations in the oil markets, with more expected as the war continues.

- This, in turn, will lead to higher airfares—and soon.

- Will the administration's efforts to increase US oil production help? They will not.

- Climate scientists believe that a "super" El Niño currently forming in the Pacific Ocean could push temperatures to record levels over the next 12-18 months. Because, you will remember, burning oil raises sea surface temperatures.

Unrelated to all of that, the Writers Guild of America released its annual State of the Industry report yesterday. With the entertainment industry contracted, and writers—you know, the people without whom you wouldn't have your entertainment—getting paid less, why are the studios making bank? (Hint: oligopoly.)

The temperature at O'Hare yesterday hit 22.8°C, beating the previous record high by...

(Well, that's interesting. The National Weather Service seems to have removed its Chicago daily temperature records pages. Fortunately, the National Centers for Environmental Information has a tool to find the previous record, but this is just another annoying effect of the OAFPOTUS's wanton destruction of our government. I suppose I should build out the climate features on Weather Now before the data goes away entirely...)

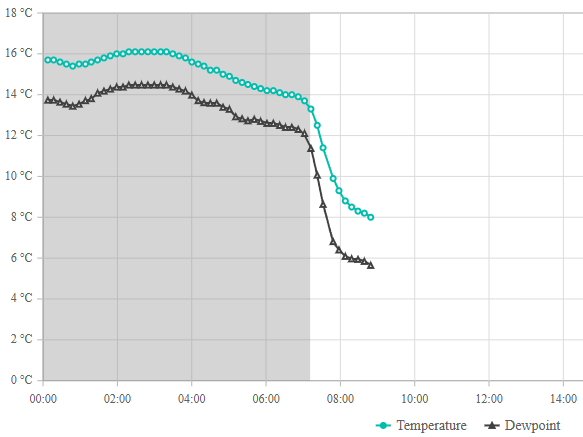

Anyway, the previous record was 20.6°C, set in 2021 and nearly tied by last year's 20°C. Flash forward 18 hours and it looks like a cold front has just passed through:

Cassie and I started her morning walk just after 7:30, when the temperature was still 11°C. It was 9°C when we got home 20 minutes later. And now it's around 8°C.

Other than the war with Iran that the OAFPOTUS and his droogs started a week ago, what on earth could have caused this?

Anne Applebaum grits her teeth:

I don’t know what the White House expected when US and Israeli forces began bombarding Iran, but they seem not to have anticipated the global impact of their actions. The Iranian regime did not collapse quickly. Iranian drones and missiles hit US bases and consulates, and also damaged civilian targets across the Gulf States, including luxury hotels, airports and energy infrastructure. The result: Hundreds of thousands of tourists and travellers got stuck because of closed airspace. Oil prices skyrocketed upwards. The gas market is in chaos, as the Qatari refinery that produces one-fifth of the world’s supply went offline.

The Chinese and the North Koreans are also watching as American air defenses are used up, and as American allies back away, and making calculations accordingly. So are the Japanese, the Taiwanese and the South Koreans. In the coming weeks, the war in Iran will shape decisions made all across Asia, and around the world. Nobody in the US administration seems to have given much thought to mitigating this kind of collateral damage, let alone the deaths and suffering of civilians in Iran. The attitude is rather let’s drop the bombs and then see what happens.

The war in Iran has profound implications for American politics too. ... Americans should be profoundly concerned about this administration’s inability to explain why the war began, and what the endgame is supposed to be. I hope it ends with a better government in Iran, but so far, again, there is no evidence that either the White House or the Israelis have thought much about that. Instead, the goals and purpose keep changing. Congressional Republicans refuse to exercise any oversight. The public is kept in the dark about important aspects of the war. The secrecy and the dissembling are features of warfare more common to dictatorships than to democracies, where public support is normally needed before a government conducts a war as broad and expensive as this one.

It doesn't help that the OAFPOTUS is a 79-year-old with a family history of Alzheimer's who exhibits symptoms of advanced frontotemporal dementia.

He keeps losing and lashes out by going bigger when he does. How many wars will we fight without any strategy or benefit to the United States before Congress pulls him out of office? I'm afraid we're going to find out.

We got almost up to 16°C today before a cold front pushed through around noon, so the temperature has already fallen to 11°C on its way down to 6°C by sunset.

And then, the rest of the week, we'll bounce around between 20°C with sun on Monday and 4°C with rain on Tuesday.

March always gives us a fun ride. At least the sun is coming out behind the cold front...

On 5 March 2001, Federal judge Marilyn Patel (ND-CA) ordered music-sharing service Napster to remove the copyrighted materials listed in A&M Records' complaint against the service. This effectively killed Napster:

At the peak of Napster’s popularity in late 2000 and early 2001, some 60 million users around the world were freely exchanging digital mp3 files with the help of the program developed by Northeastern University college student Shawn Fanning in the summer of 1999. Radiohead? Robert Johnson? The Runaways? Metallica? Nearly all of their music was right at your fingertips, and free for the taking. Which, of course, was a problem for the bands, like Metallica, which after discovering their song “I Disappear” circulating through Napster prior to its official release, filed suit against the company, alleging “vicarious copyright infringement” under the U.S. Digital Millennium Copyright Act of 1996. Hip-hop artist Dr. Dre soon did the same, but the case that eventually brought Napster down was the $20 billion infringement case filed by the Recording Industry Association of America (RIAA).

That case—A&M Records, Inc. v. Napster, Inc—wended its way through the courts over the course of 2000 and early 2001 before being decided in favor of the RIAA on February 12, 2001. The decision by the United States Court of Appeals for the Ninth Circuit rejected Napster’s claims of fair use, as well as its call for the court to institute a payment system that would have compensated the record labels while allowing Napster to stay in business.

[On] March 6, 2001, Napster, Inc. began the process of complying with Judge Patel’s order. Though the company would attempt to stay afloat, it shut down its service just three months later, having begun the process of dismantling itself on this day in 2001.

Flash forward 25 years, and training large language models with copyrighted material has created new legal problems for authors and users alike.

Homeland Security Secretary Kristi Noem is out:

President Trump fired his embattled homeland security secretary, Kristi Noem, on Thursday and announced plans to replace her with Senator Markwayne Mullin of Oklahoma, after she was grilled by Republican lawmakers this week at congressional hearings on a variety of topics, including her knowledge of a lucrative advertising contract.

Mr. Trump announced the change on social media, along with a new, and previously nonexistent, role for Ms. Noem: special envoy for the Shield of the Americas, which he said would be a new security initiative for the Western Hemisphere.

According to news reports, the OAFPOTUS has nominated US Senator Markwayne Mullin (R-OK) to replace Noem, but with the Senate currently shut down by a debate about funding DHS in the first place, it could be a while before they confirm Mullin.

Sure happy it's Thursday, early March edition

It must be lunchtime:

- Josh Marshall takes a hard look at why ships don't want to traverse the Strait of Hormuz.

- Fully 97% of the 35,000 public comments submitted to the National Capital Planning Commission about the OAFPOTUS's ballroom are negative.

- Speaking of planning, or lack thereof, Jeff Maurer quotes Jurassic Park to explain how we have no idea what will happen in Iran, but it probably won't be good for us.

Finally, Jaci Clement at the Fair Media Council has some quick tips on how to evaluate a news article in less than 10 seconds. For instance: "First name + last initial byline? Skip it. Credible journalism requires accountability."

Enjoy your Thursday. Tomorrow, Chicago could hit 22°C. Won't that be nice.

The Times had some (not at all) surprising news yesterday:

Studies show that having a pet is associated with lower blood pressure, a reduced risk of cardiovascular disease, and lower rates of death after a heart attack or stroke. And a large review of studies published in 2019 found that owning a dog was associated with a 24 percent lower risk of dying from all causes over the course of 10 years.

The benefit is so striking when it comes to heart health that the American Heart Association even has a scientific statement devoted to it, declaring that dog ownership “may be reasonable for reduction in cardiovascular disease risk.” (The organization doesn’t advise getting a dog for the sole purpose of heart health, though.)

Experts think one potential explanation for the health benefits is that people who own dogs tend to be more physically active than those who don’t.

Or the health benefits of pet ownership may simply be an effect of demographics. Dog owners tend to be younger and richer than non-owners, characteristics that correspond with better health. In one large meta-analysis, when things like age, income and health behaviors such as smoking were factored into the statistical analyses, many health benefits of dog ownership disappeared.

So, don't smoke and adopt a dog. It worked for me! (And for Cassie.)

Day 5 of not knowing WTF we're doing in Iran

I saw a stat on social media that turned out to be true: at its worst, in early 1973, the Vietnam War had the approval of 29% of Americans. But only 27% approve of what we're doing in Iran (or at least, they did on Sunday). Of course, an appeal to popularity isn't a valid argument, so let's look at the evidence:

- Part of why this started out as the least popular war since polling began: it is not what the OAFPOTUS promised his MAGA stooges, a betrayal that has finally dislodged a handful of them from the cult.

- Because the OAFPOTUS and his droogs lack forethought, planning, competence, and intelligence (the cerebral kind), about 10,000 Americans are stranded in the region.

- This may have something to do with the three major Arabian Peninsula airlines (Qatar, Emirates, and Etihad) cancelling 94% of their scheduled operations since Sunday, with all operations in and out of Bahrain halted.

- The war has also halted sea traffic after Iran did what everyone with a triple-digit IQ predicted and blockaded the Strait of Hormuz, causing yet another economic shock that our economy will have to reckon with in short order.

- Not yet a week in, and even conservatives see the OAFPOTUS's hubris, the same hubris that eventually catches up with most ego-driven would-be conquerors.

- And let's pause for a moment to consider how absolutely out of his depth ~~Jerry Lundegaard~~ Defense Secretary Pete Hegseth appears in all this. (Of course, as Maurer says, "Hegseth, Rubio, et. al. can’t be fully candid about our objectives, because the real objective — glorify Trump — is not something the administration can admit.")

- What about the Iranian people? Need we even ask?

The only bright spots in the last 24 hours are the election results in Texas, North Carolina, and Virginia, which suggest that the general election in 243 days will go about as well for the Republican Party as this needless and stupid war is going for the UAE, and for exactly the same reasons.

I've got a lot of plates spinning at the ends of sticks for the next couple of days, including figuring out who to vote for in the Illinois Primary Election two weeks from today. Since I won't be in Chicago on election day, I've got to do this by next Friday at the latest. The other spinning plates are a bit bigger.

Instead, today I want to mention that the Federal Aviation Administration has scheduled a meeting for tomorrow with American and United, because the two carriers have way too many operations scheduled at O'Hare this summer. Cranky Flier explains:

The basic point of the meeting is to figure out how to come up with a schedule that can actually be operated. As the FAA notes, peak days have more than 3,080 daily operations this year compared to only 2,680 last summer. That is not sustainable. This has been an open secret for months now in the wake of American and United bulking up their operations. There was never any chance that all of these flights could operate without serious operational problems. Now the FAA has to figure out how it wants to implement this.

Of course, we don’t know how the FAA will look at this. Will it agree to reductions based on what’s filed? Or will it look at what flew last year, or perhaps pre-pandemic? Chances are American will argue for going back as far as possible while United wants to use this summer. We will know more soon.

Regardless of what happens, United is likely going to still have a very substantial number of flights compared to American when all is said and done. And that had to be the airline’s ultimate goal.

In theory, all the extra departures should be good for travelers' wallets and bad for their experience getting into or out of O'Hare. I have no flights planned for the summer, though I am planning to plan at least four. I'm curious to see how tomorrow's meeting affects those plans.

Ah, you fool! You fell victim to one of the classic blunders! The most famous of which is "never get involved in a land war in Asia."

Every Republican administration in this century has gotten us into land wars in Asia, and every Democratic administration has pulled out of them. Seeing a pattern? But this one has got to be the dumbest yet. No one knows what our goals are, apparently up to and including the OAFPOTUS.

Adam Kinzinger: "[e]liminating a dictator is not the same thing as having a strategy. War is not a television episode. It is not a moment designed to generate a headline or project toughness. War is the most serious decision a nation can make. When the United States uses military force, Americans deserve clarity. They deserve to know why we are fighting, what the objective is, and how the mission ends. Right now, that clarity does not exist. Explain the mission to the American people. Define the objective. Show how success will be measured. And most importantly, demonstrate that there is a plan for what comes after the bombs stop falling."

Julia Ioffe: "Behind the bravado and the patriotic chest-thumping, what, exactly, are we doing? What are America’s goals and how are we achieving them? It’s hard not to find joy in the footage of Iranians celebrating the ayatollah’s death, especially after he had shot so many of them in the streets just over a month ago—but we’ve seen such footage before. We saw it a decade ago in Libya, Tunisia, and Egypt; two decades ago in Iraq and Afghanistan; and three decades ago in Russia: the overwhelming relief that occurs when a people brutalized by a repressive dictator collectively realize that the dictator is gone. It’s a beautiful moment that, in recent history, has proved to be rather fleeting. Life is not a movie, and it keeps going after the villain is vanquished and the credits roll. Without real institutional alternatives—and even with American support—countries can easily descend into civil war or revert back to autocracy. Joy and relief are not antidotes to the darker sides of human nature and the laws of political gravity they create."

Glenn Kessler: "A functioning democracy conducts its foreign policy based on the nation’s interests. That’s because elected representatives, such as a president, should make decisions based on the long-term goals of the country, not the leader’s whims. Foreign policy based on personality is not only damaging to the long-term interests of the United States but also deeply corrupting. When policy depends on presidential moods, the country eventually pays the price."

Brian Beutler: "It’s slightly reductive, though defensible, to suggest Trump attacked Iran to distract from the Epstein files. But it’s quite clear that Trump views setting the news agenda for the country to be politically paramount. Attacking Iran...shakes the snow globe in ways that might make partisan politics less turbulent for him and his allies in Washington. If Democrats understand (as they should) that Republicans view politics as war by other means, they should also be prepared to pre-empt these slanders. If they want Trump and the GOP to suffer politically for this war, they need to understand it, the way Trump does, as a brickbat of domestic politics. Whose Benghazi will this be?"

Josh Marshall: "What strikes me in these poll numbers and my general read of the moment is not so much the opposition to the conflict, though that’s certainly there, as how irrelevant most Americans see this conflict to anything that is happening in the country. You’ve got economic concerns over affordability, health care, the long half life of the shock of the pandemic. You have the domestic political situation, which many Democrats see as an existential battle over the future of democracy and the country itself. MAGA may be thinking about crime, the culture war, mass deportation and more. But neither of these worldviews hold much place for a regime change war against Iran, especially one that seems to be escalating rapidly. Trump might get lucky and hasten the fall of the Iranian government by killing Khamenei. But I don’t get the sense much of the public cares. A clear majority opposes the whole thing. But it doesn’t seem like ingrained anti-war sentiment, the kind of thing that will bring people into the streets, at least not now. It reads more like a grand 'what the F is this about.'"

In other news of a perhaps ill-advised decision, albeit one that will be much easier to change, the government of British Columbia will start permanent daylight saving time on Sunday, with 93% of 223,000 people surveyed agreeing with the change. Let's check in with them in December, when the sun rises at 9:07 am.

Yes, there are other things going on in the world (ugh!). Let's just take a moment to reflect that we (in the northern hemisphere, anyway) got through another winter.

More posting later today.

The US and Israel have launched "major military operations" in Iran, with no strategic clarity or stated rationale other than "regime change:"

The attack on Iran came hours after Trump said he was “not happy” about the latest negotiations with Iran over its nuclear programme.

Both the US and Israel called for regime change in Iran and urged a popular uprising after Saturday’s attacks.

Trump called on the Iranian people to “take over your government” in a video on his Truth Social platform. He offered the Iranian military “immunity” should they surrender, or “certain death” if not, and told Iranians the “hour of your freedom is at hand”, urging them to rise up and “take over your government”.

By late morning the scale of Iran’s attacks across the Middle East was becoming clear, as its Revolutionary Guards commanders insisted there were no red lines and no targets off limits. Explosions heard in Bahrain, Abu Dhabi and Kuwait suggested Iran had activated its plan to try to hit as many US bases in the region as possible. Iran said warnings had been given to the Gulf states’ leaders explicitly in the past and that no one should be surprised by what was to come. The UAE and Kuwait closed their airspace.

Andrew Sullivan called bullshit on this operation before it began, postulating that the actual rationale for this geopolitical own-goal is that Israeli Prime Minister Binyamin Netanyahu knows it's the last time the US will support him:

I listened to Tucker Carlson’s interview with Mike Huckabee this week and was struck as much by Huckabee’s flailing as Tucker’s excesses. Huckabee came off as the Israeli ambassador to the US, not the other way round. He held assumptions that would sound insane to anyone under 40 not steeped in generations of bizarre, evangelical Israel-fetishism.

He had zero explanation, for example, for why he would host a vile American traitor, Jonathan Pollard, in the US embassy. I mean: WTF? Huckabee barely acknowledged the existence of Palestinians at all. And he basically argued that the Old Testament is the dispositive guide to US foreign policy, and that Israeli expansion as far as Iraq (!) remains a divine right the US affirms. Dangerous lunacy — but then you realize the opposition leader in Israel, Yair Lapid, agrees, and you begin to see the scale of the problem.

All of which leads to one obvious conclusion. The only reason we may be on the brink of war is because Netanyahu knows this could be his last chance to leverage the might of the United States for his own ends: unchallenged Israeli supremacy in the region alongside more aggressive ethnic cleansing at home.

This is, in other words, the last chance for the tail to wag the dog. Get ready for the fallout.

The governments of Israel and the United States are creating the conditions for the complete ostracism of Israel from the West. Combine that with the vast numbers of people who hold Jews outside Israel responsible for that country's government (which makes about as much sense as holding your local Catholic parishioners responsible for the Crusades), and you get a recipe for more antisemitism. Michelle Goldberg, writing yesterday, lays out the problem:

By aligning Zionism with American authoritarianism, Israel’s champions earned the country the enmity of many Democratic partisans. The influential resistance podcaster Jennifer Welch is indicative. A wealthy interior designer from Oklahoma, she was once a Hillary Clinton-supporting Democrat who backed Israel without thinking much about it. But more recently, she told Zeteo’s Mehdi Hasan, she’s come to link the pro-Israel lobby with the forces destroying American democracy. “My husband always said, ‘I don’t know what’s going on in Israel and Palestine, but I just know every politician I hate supports Israel,” she said, using an obscenity.

Netanyahu and his government deserve this growing bipartisan opprobrium. Unfortunately, ordinary Jews are experiencing it as well. I’ve long argued that anti-Zionism and antisemitism aren’t the same thing. Yet as antisemitism rises in the United States, contempt for Israel sometimes gives way to anti-Jewish paranoia and hostility. Carlson doesn’t just disparage Israel; he also hosts white nationalists and Holocaust deniers. And just this week, Uygur’s “Young Turks” colleague Ana Kasparian indulged in an antisemitic outburst on X, writing, “The goyim are waking up. Deal with it.” (She used an obscenity I’m not allowed to repeat here.) Kasparian refused to apologize, insisting that she was merely deploring Israel, even though “goyim” is a Yiddish word for non-Jews, not non-Zionists.

No one is to blame for Kasparian’s bigotry but herself. But Israel, by behaving appallingly and then trying to silence any condemnation of its appalling behavior as antisemitic, gives ammunition to Jew haters.

And now, we've attacked yet another Muslim West Asian country. That's never gone badly before, has it?

Question for those who voted for the OAFPOTUS (even if you're lying to pollsters about it now): how's the "Peace President" who "stopped 86 wars in just three days" working out?

I promised to include former White House speechwriter James Fallows' reaction to Tuesday night's Klan rally State of the Union address. Suffice it to say, Fallows was not pleased:

I didn’t write about Donald Trump’s performance at the Capitol on Tuesday night, because I couldn’t stand to watch the whole thing. I turned it off when it still had 45 minutes to go. For context: All presidents from Richard Nixon through the first George Bush kept their SOTU addresses near or below 45 minutes, total. One of Nixon’s lasted only 28 minutes. One of Reagan’s, just 31. Two of Carter’s, just 32 each.

And I didn’t write about it yesterday, after going back to watch those last, lost 45 minutes, because after doing so I thought: This is too horrible to deal with.

This speech was racist, full of lies, narcissistic, divisive, and so on. The examples and details have been reported everywhere. By now, all of that is baked in.

The difference this time is that the speech was boring.

Everything’s a prop. And everyone is a player in the Trump show.

Donald Trump called out two brave veterans—one still young, one 100 years old—for their in-uniform sacrifice. (That of the young one involved multiple references to “gushing blood” and “blood running down the aisles” of his military helicopter.) In a step never before seen at a SOTU, he awarded them the highest military recognition, the [Congressional] Medal of Honor, on camera as part of his show.

No lies there. I'm glad Fallows was finally able to stomach the whole thing.

In his latest post, satirist Jeff Maurer argues that the Democrats in Congress walked into a trap "genitals first" when the OAFPOTUS "invited" Congress to stand in support of a fairly basic premise of national sovereignty. (My representative, Mike Quigley, skipped the speech.) The OAFPOTUS counted on the Democrats remaining seated, and he was right. As Maurer says,

Obviously your “first job” as an elected official is to represent the people who elected you, not anyone else. The statement is true if you put any other group in the sentence: “The first duty of the American government is to protect American citizens, not Austrians.” Yes, of course — Austrians have their own weird, lederhosen-wearing government to protect them, the American government is for Americans. Plus, if Democrats had stood up, Trump would have been screwed — it would have been like the Louis CK joke about the “do you like apples” scene in Good Will Hunting.

Presented with an opportunity to literally stand up for the American people and leave Trump with his dick twisting in the wind, congressional Democrats instead provided a snippet for Republican attack ads this fall.

I think Maurer rather oversells it, as the only people who will care one way or the other about that moment 90 minutes into an already horrible speech are people like Maurer and me who already care about it.

But Maurer makes another point, which I think is much more important:

[A]s much as people see extreme right-wingers as overzealous bastards, they’re at least overzealous bastards for the in-group. It’s the old “he’s a son of a bitch, but he’s our son of a bitch” thing. To the extent that Trump is more Chieftain than president, he’s unquestionably a Chieftain for the American tribe. And therefore people sometimes dislike his methods but broadly see his goals as directionally correct.

Extremists on the left, though, are seen as siding with the out-group. And that’s because…well, because they often do side with the out-group. There’s a long history of far leftists siding with the Soviet Union, Cuba, or — more recently — Hamas. There is currently a dialogue on Twitter about a crazy woman taking a dump on a New York subway train, and a small number of people are siding with the subway pooper — some folks will do anything except take society’s side. This orientation is rightly seen as anti-social, and it’s hard to win people over when your message to those people is “you are the absolute worst.”

... [I]f Democrats think that Republicans’ mindless tribalism means they can engage in mindless tribalism of their own, I think they should think a little harder about who makes up the “tribe” in question.

I don't know if he's right, but I believe he might be. And I don't know if his prescription is right, either, but I believe it might not be.

This primary season will be interesting, that's for sure. Will we field candidates who voters will actually elect? We have a once-in-a-generation opportunity here. I will be furious if we squander it.

Copyright ©2026 Inner Drive Technology.